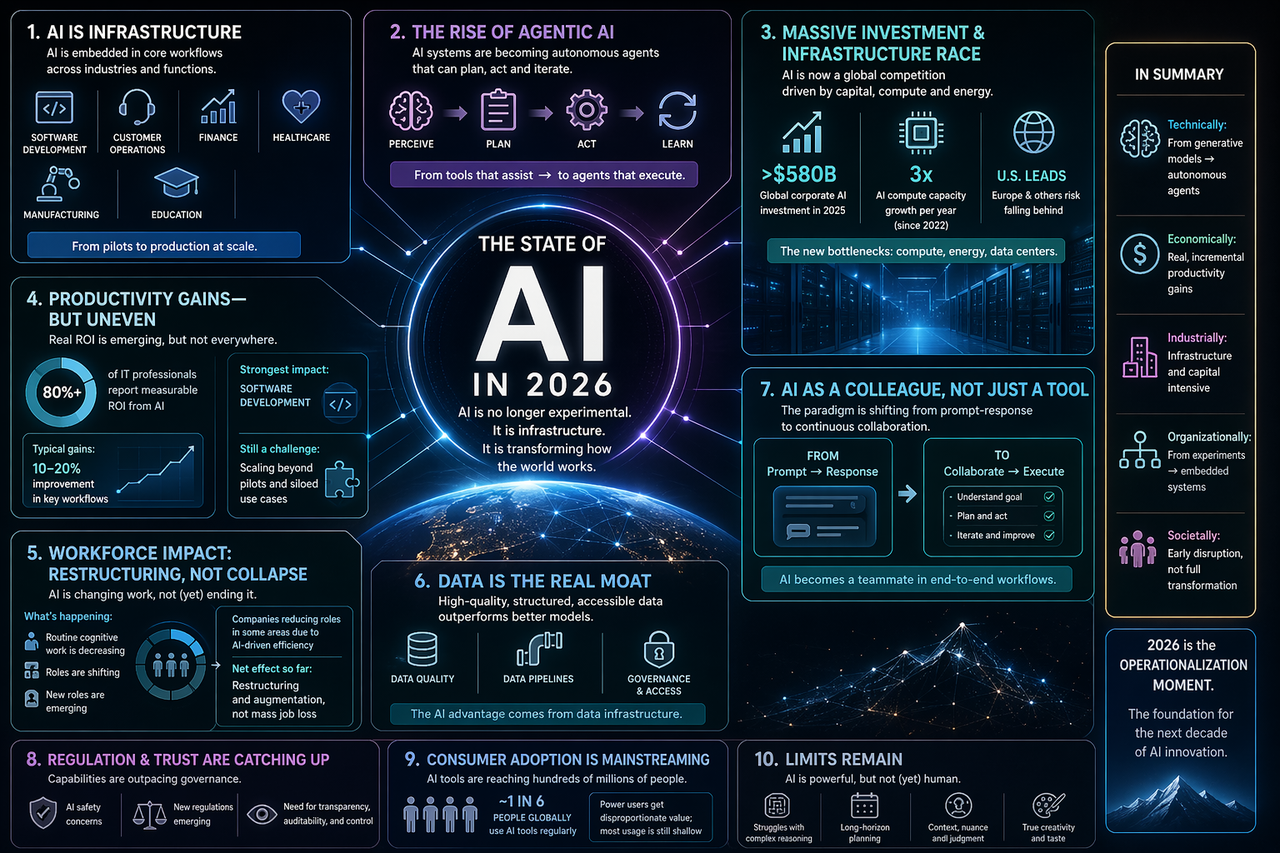

In 2026, the most useful way to think about AI is not as a category of products, nor even as a wave of clever models, but as infrastructure. That sounds less exciting than chatbots writing sonnets, but it is a much more important shift. Infrastructure is what quietly changes the default shape of work. Electricity did that. Cloud computing did that. Mobile internet did that. AI now belongs in that class: not because it has become magical, but because it has become embedded.

That distinction matters. The earlier public phase of AI was dominated by spectacle. People wanted to know whether models could pass an exam, beat a benchmark, generate a photorealistic image, or mimic a professional writing style. The current phase is less theatrical and more consequential. The interesting question is no longer “what can the model do in a demo?” It is “what happens when an organization starts relying on this every day?” Once that becomes the frame, the conversation changes immediately. Reliability matters more than surprise. Integration matters more than raw intelligence. Workflow fit matters more than novelty.

That is why 2026 feels different from the last few years. AI is increasingly judged by whether it can reduce cycle time, compress decision latency, remove repetitive coordination, or make a system more responsive under real constraints. In software teams, this means AI is less often treated as a code autocomplete toy and more often treated as a co-worker that drafts, reviews, proposes, tests, documents, and sometimes directly executes bounded tasks. In operations, it is not replacing the need for people, but it is changing the ratio between judgment and routine handling. In finance, customer support, logistics, healthcare administration, and internal knowledge work, the center of gravity has moved from experimentation toward operationalization.

The second large change is the rise of agentic behavior. “Agents” still means too many different things depending on who is speaking, but the direction is clear. AI systems are becoming less like static answer machines and more like process machines. They can interpret a goal, produce a plan, call tools, inspect results, revise their own output, and continue until a stopping condition is met. This does not mean they are autonomous in the science-fiction sense. It means they are increasingly useful in bounded loops where a human used to play the role of scheduler, copier, checker, and follow-up machine.
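The loop described above (interpret a goal, pick an action, call a tool, inspect the result, continue until a stopping condition) can be sketched in a few lines. This is only an illustrative sketch under stated assumptions: `run_agent`, `scripted_policy`, `lookup_order`, and `draft_reply` are hypothetical names, the policy is a hard-coded stand-in for whatever model would make the decisions in a real system, and the order data is made up for the example.

```python
from typing import Any, Callable, Optional

# Hypothetical tools: plain functions standing in for real integrations.
def lookup_order(order_id: str) -> dict:
    orders = {"A-17": {"status": "delayed", "days_late": 4}}  # made-up data
    return orders.get(order_id, {"status": "unknown"})

def draft_reply(order: dict) -> str:
    if order.get("status") == "delayed":
        return f"Your order is delayed by {order['days_late']} days."
    return "We could not find your order."

TOOLS: dict[str, Callable[[Any], Any]] = {
    "lookup_order": lookup_order,
    "draft_reply": draft_reply,
}

def scripted_policy(goal: str, history: list) -> tuple[str, Any]:
    """Stand-in for a model: choose the next action from the goal and past results."""
    if not history:
        return "lookup_order", goal
    last_action, last_result = history[-1]
    if last_action == "lookup_order":
        return "draft_reply", last_result
    return "finish", last_result

def run_agent(goal: str, choose_action, tools, max_steps: int = 5) -> tuple[Optional[str], list]:
    """Bounded loop: choose an action, call a tool, record the result, repeat."""
    history: list = []
    for _ in range(max_steps):
        action, arg = choose_action(goal, history)
        if action == "finish":                # stopping condition reached
            return arg, history
        result = tools[action](arg)           # tool call
        history.append((action, result))      # kept so the next choice can inspect it
    return None, history                      # step budget exhausted: escalate to a human

answer, trace = run_agent("A-17", scripted_policy, TOOLS)
print(answer)
```

The `max_steps` budget and the `None` return are what make the loop "bounded" in the sense used here: when the agent cannot finish within its budget, the work goes back to a person instead of continuing indefinitely.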

That is a bigger deal than it sounds. A great deal of white-collar work is not deep genius; it is glue work. It is moving information between systems, nudging a process forward, handling edge cases, summarizing state, and translating one team’s output into another team’s input. Agentic AI is steadily moving into that layer. The result is not dramatic job disappearance all at once, but a slow restructuring of roles. Teams that were organized around queue handling, formatting, or administrative mediation are being redefined. Individual contributors with good taste and domain knowledge become more leveraged. Managers are pushed toward clearer delegation because ambiguous operating environments produce worse AI outcomes.

At the same time, the economics of AI have become much more tangible. In 2026, nobody serious thinks this is just a software story. It is a capital story and an energy story. Behind every polished AI experience sits a stack of expensive realities: chips, data centers, network capacity, cooling, power availability, procurement timelines, model hosting costs, evaluation pipelines, governance overhead, and, increasingly, organizational retraining. The bottleneck is no longer merely model capability. It is the entire supply chain of usable intelligence. That is why the geopolitics around AI now feel inseparable from industrial policy. Whoever controls compute, energy, talent concentration, and deployment discipline will shape the next decade far more than whoever wins a single benchmark.

This also explains why the competitive field looks uneven. The United States still leads in the combination of frontier model development, capital formation, hyperscale cloud capacity, and talent magnetism. Europe continues to produce strong research, excellent technical operators, and sound regulatory instincts, but too often struggles to convert that into deployment speed. Many firms outside the largest tech centers are now in the uncomfortable middle: they can access powerful models, but not necessarily the organizational or infrastructural muscle to turn that access into lasting advantage. The gap between “can use AI” and “can compound with AI” has become one of the defining business differences of the year.

Another important realization in 2026 is that data still matters more than many people hoped. The fantasy that superior models alone would erase bad systems has mostly collapsed. AI amplifies what an organization already is. If your data is fragmented, contradictory, politically contested, or inaccessible, AI will reveal that faster than any consultant. If your processes are poorly defined, AI will scale confusion. If your teams cannot agree on what success means, a model will not rescue you. But when data is clean enough, workflows are explicit enough, and ownership is clear enough, AI can feel astonishingly effective. That is why the real moat for many companies is no longer the model itself. It is the surrounding data infrastructure and institutional clarity.

Consumer adoption has also matured. Hundreds of millions of people now touch AI systems regularly, but usage is still shallower than the hype cycle implied. For many users, AI is not yet a daily operating environment so much as a situational amplifier: something they turn to for brainstorming, explanation, drafting, coding help, translation, or image generation. The strongest consumer shift is not constant dependence but lowered activation energy. People increasingly assume that if a task involves language, synthesis, or pattern shaping, an AI layer should probably be available. That expectation is subtle but powerful. Once users assume the layer belongs there, products without it begin to feel incomplete.

Regulation, trust, and social adaptation are finally catching up, though not in a neat or unified way. The conversation has matured beyond “should AI exist?” toward more practical questions: what must be auditable, who is accountable for model-mediated decisions, how should synthetic content be disclosed, what counts as acceptable delegation, and where should human override remain mandatory? None of this is solved. But the quality of the discussion is better than it was. Organizations now understand that trust is not a branding exercise layered on top of deployment. It is part of the system design itself.

If there is one sentence that best captures AI in 2026, it is this: the technology is no longer experimental, but its social form still is. We know enough now to see the contours. AI is becoming embedded in the ordinary machinery of production. It is making some people dramatically more effective, exposing the weakness of brittle institutions, and forcing companies to confront whether they are genuinely operational or just manually compensating for their own fragmentation. The main story is no longer whether AI works. It does. The harder and more interesting story is who can reorganize around that fact faster than everyone else.